Off Grid CTO: Off Grid Power Efficient AI

Off Grid CTO: An AI Solution With Low Power

Welcome to the next edition of my blog about living off the grid, while working as the CTO of ModelOp, a great software company that allows people to work from wherever they happen to be. We are hiring, so if you want to work for a great company on the leading edge of the AI space, see our website for current opportunities.

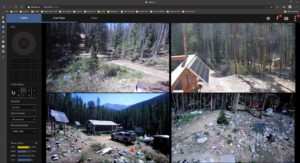

One of the challenges of being so remote is that when you are away, you want to be able to check in on the place and see that things are still working OK. I have the ability to check on the battery charge stats from anywhere in the world, see the current weather, and get a few views of my property from different solar powered security cameras scattered about the property.

This comes in really handy for knowing whether there is enough snow to warrant a trip to shovel the roof, whether we can drive in with a regular vehicle yet, or just for seeing the various animal visitors we get that we may not even notice when we are here.

So we get alerts on motion detection, but every time there was a windy day, or a moth flew by, it would result in an alert. There had to be a better way to handle this. Sure enough, machine learning based object detection would solve it, but its compute requirements are a big issue on a low power budget.

I will be talking about some products I used to solve this problem, but I do not receive any compensation for these items. They are just what I myself bought and used to solve the problem at hand.

“Some people worry that artificial intelligence will make us feel inferior, but then, anybody in his right mind should have an inferiority complex every time he looks at a flower.”

—Alan Kay

The Core Stack

To run the security cameras, I use a variety of standard IP cameras that connect either through Ethernet cable, if attached to the cabin, or through Wi-Fi if more remote. For the remote monitoring, motion detection, and a convenient web based UI, I use the popular software Blue Iris. Unfortunately it only runs on Windows, but its capabilities are superior to any of the Linux based solutions I tried out.

So to actually run the software itself, I use a low powered fanless mini pc that has enough power to run the camera software, and not much else. It uses very little power, so can be run continuously without fear even on cloudier days.

This, however, means it does not have much extra compute power to handle anything else. Blue Iris recently added support for the open source Deepstack object detection software, but there was no way I could run that on the same machine. It just demanded way too many compute resources, and really needs something with a decent GPU.

I considered upgrading the PC, but anything that had enough juice to handle it also wanted to suck up way too much power for the task at hand. I needed a low power solution that could handle this.

Meet the Jetson

So I needed something more like the Raspberry Pi, but that could handle an AI load relying on GPU resources to accelerate the compute. Object detection for images in real time requires some muscle. Fortunately, we only need to do the object detection when motion is detected, rather than on a live video stream, so that helps reduce the requirements a bit.

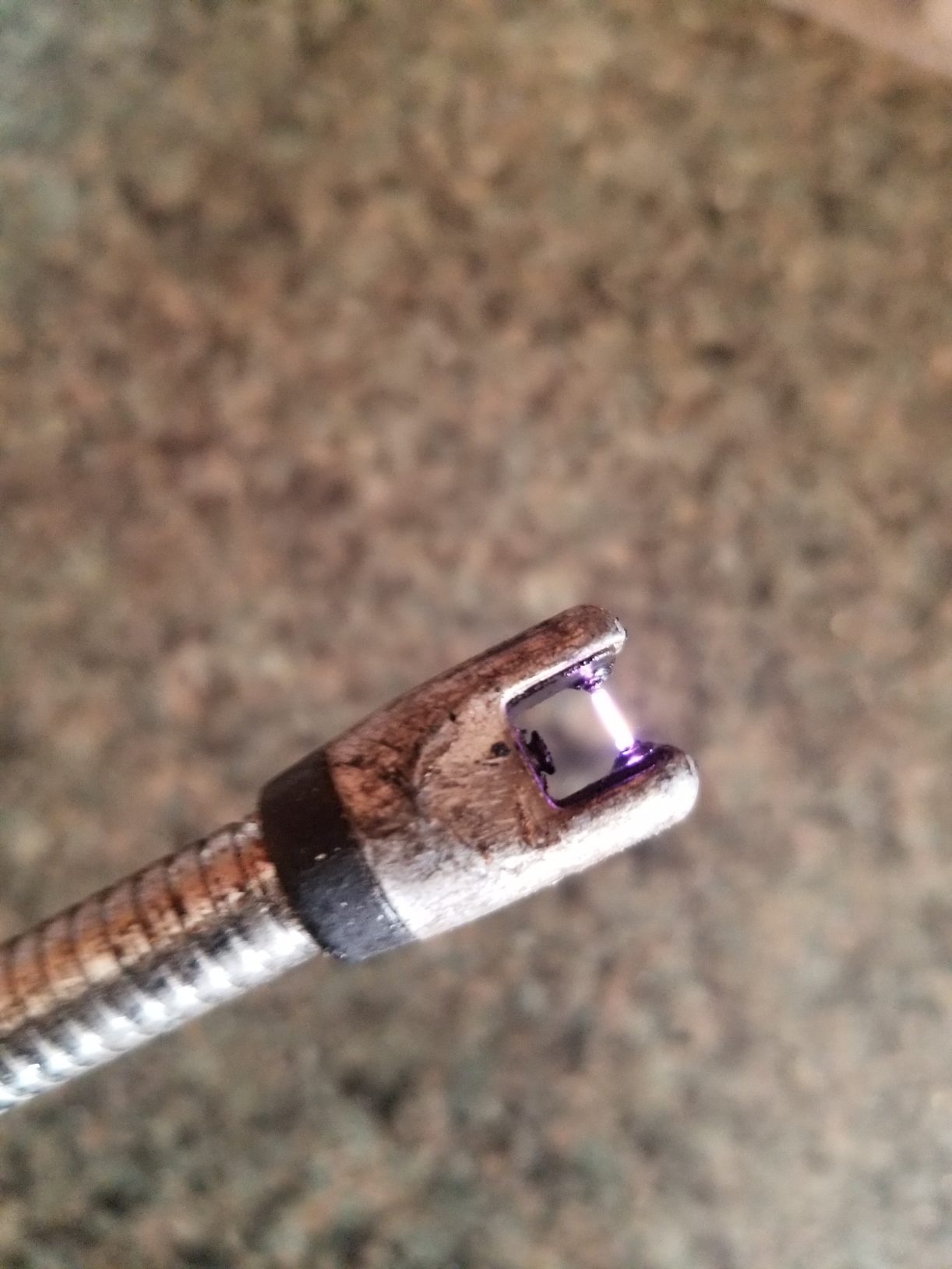

In steps the NVIDIA Jetson Nano. It is a small unit with a GPU that runs on 5 V and draws approximately 10 W at most under normal use. I do not power it over USB, but instead supply up to 4 A through the barrel connector for higher performance.

It also has a 128-core Maxwell GPU, as well as a quad-core ARM A57 CPU at 1.43 GHz paired with 4 GB of memory, meaning it can handle some decent load. The base Linux software it ships with (JetPack) is already CUDA enabled and ready to go. So this sure seemed like a great solution for image processing at low power. But what do we run on it?

“The sad thing about artificial intelligence is that it lacks artifice and therefore intelligence.”

—Jean Baudrillard

“Before we work on artificial intelligence why don’t we do something about natural stupidity?”

—Steve Polyak

Deepstack for Jetson

Well, the Nano itself provides a CUDA enabled Linux distribution, so the next step was to place something on it to do the image recognition. Fortunately, Blue Iris already provides direct integration with Deepstack, an open source image recognition platform. It has a simple REST style API, so it is really very easy to use, and it also includes Python support.

Deepstack is a dockerized AI solution utilizing standard libraries under the covers to perform the work. It can do a large variety of detection types, but for our purposes, we wanted to just do object detection, not scene or face detection. It has an install specific to the Jetson, so you simply install Docker first, then launch a Docker container with the Deepstack server already installed. It will then listen on the configured port for any requests, and send back the coordinates of detected objects along with their labels.
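For anyone who wants to poke at the API directly rather than through Blue Iris, here is a minimal sketch of a client using only the Python standard library. It assumes the object detection endpoint is `/v1/vision/detection`, that the image goes in a multipart field named `image`, and that the response carries a `predictions` list with `label`, `confidence`, and `x_min`/`y_min`/`x_max`/`y_max` keys, which is my understanding of the Deepstack documentation. The IP address, port, and function names are hypothetical placeholders, so check them against your own install.

```python
import json
import urllib.request
import uuid


def build_multipart(field_name, filename, data, extra_fields=None):
    """Encode one file plus optional text fields as multipart/form-data."""
    boundary = uuid.uuid4().hex
    parts = []
    for name, value in (extra_fields or {}).items():
        parts.append(
            f'--{boundary}\r\nContent-Disposition: form-data; '
            f'name="{name}"\r\n\r\n{value}\r\n'.encode()
        )
    parts.append(
        f'--{boundary}\r\nContent-Disposition: form-data; name="{field_name}"; '
        f'filename="{filename}"\r\n'
        f'Content-Type: application/octet-stream\r\n\r\n'.encode()
        + data
        + b"\r\n"
    )
    parts.append(f"--{boundary}--\r\n".encode())
    return b"".join(parts), f"multipart/form-data; boundary={boundary}"


def parse_detections(response_text):
    """Turn a detection response into (label, confidence, box) tuples."""
    payload = json.loads(response_text)
    if not payload.get("success"):
        return []
    return [
        (p["label"], p["confidence"],
         (p["x_min"], p["y_min"], p["x_max"], p["y_max"]))
        for p in payload.get("predictions", [])
    ]


def detect_objects(image_bytes, base_url="http://192.168.1.50:80",
                   min_confidence=0.4):
    """POST one image to the detection endpoint (base_url is a placeholder)."""
    body, content_type = build_multipart(
        "image", "snapshot.jpg", image_bytes,
        {"min_confidence": min_confidence},
    )
    request = urllib.request.Request(
        f"{base_url}/v1/vision/detection",
        data=body,
        headers={"Content-Type": content_type},
        method="POST",
    )
    with urllib.request.urlopen(request, timeout=10) as response:
        return parse_detections(response.read().decode("utf-8"))
```

Calling `detect_objects(open("snapshot.jpg", "rb").read())` against a running server should return one tuple per detected object. The parsing is kept in its own function so it can be exercised without a Jetson on the network.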

For standard scene type images on a high detection setting (most detailed) it takes approximately 300 ms to resolve, so not bad on 10 W of power.
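To put those two numbers in terms a solar and battery setup cares about, here is a back-of-the-envelope sketch. It assumes the board draws the full 10 W the whole time, which is a pessimistic upper bound rather than a measurement, and it uses the roughly 300 ms per image quoted above. The function names are just for illustration.

```python
POWER_W = 10.0      # assumed worst-case draw of the Jetson, in watts
INFERENCE_S = 0.3   # approximate time per detection, in seconds


def energy_per_detection_joules(power_w=POWER_W, seconds=INFERENCE_S):
    """Energy for one detection: watts times seconds gives joules."""
    return power_w * seconds


def daily_energy_wh(power_w=POWER_W, hours=24.0):
    """Energy for running continuously: watts times hours gives watt-hours."""
    return power_w * hours


print(f"{energy_per_detection_joules():.1f} J per detection")
print(f"{daily_energy_wh():.0f} Wh per day if left on around the clock")
```

Under those assumptions, each detection costs about 3 J, and leaving the unit on all day adds at most about 240 Wh to the daily load.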

And I always get this question so will go ahead and answer it. Yes, it does detect hot dogs….

Getting the Two to Talk

So the final step in setting all of this up was to give the Jetson a static IP, and configure Blue Iris to talk to the Deepstack instance running on it. This is easily done in the global config settings under the AI tab. Simply put in the URL of your Jetson, and a list of objects (from the list in the Deepstack SDK) you want it to detect.

Then on each camera, under the ‘Motion Trigger’ tab, simply click the Artificial Intelligence button and list out the objects it should look for, or reject. I also tick the checkbox to draw the labels and boxes.

Because I no longer needed to worry about false alarms from the motion detection being too sensitive, I really cranked up the sensitivity of the motion detection. What happens is the motion detection will trigger an image to be taken, then Blue Iris will send that to the Jetson for processing, and it will only alert you if it finds matching objects in the image.
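The filtering idea itself is simple enough to sketch in a few lines. This is not Blue Iris's actual code, just an illustration of the logic under my own assumptions: predictions are dictionaries with `label` and `confidence` keys, anything below a minimum confidence is ignored, a confident match on a rejected label cancels the alert, and otherwise the alert fires only for labels on the wanted list. The function name and default threshold are hypothetical.

```python
def should_alert(predictions, wanted, rejected=(), min_confidence=0.5):
    """Return the sorted labels that justify an alert, or [] for no alert."""
    # Drop low-confidence detections before looking at labels.
    confident = [p for p in predictions if p["confidence"] >= min_confidence]
    labels = {p["label"] for p in confident}
    # Assumption: a confident hit on a rejected label cancels the alert.
    if labels & set(rejected):
        return []
    return sorted(labels & set(wanted))


snapshot = [
    {"label": "person", "confidence": 0.82},
    {"label": "bird", "confidence": 0.35},
]
print(should_alert(snapshot, wanted={"person", "car"}))  # ['person']
print(should_alert([{"label": "bird", "confidence": 0.9}], wanted={"person"}))  # []
```

Because the object check does the real filtering, the motion trigger in front of it can afford to be as sensitive as you like.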

So I went from getting an alert every 15 minutes in a windstorm or heavy snow, to now receiving few, if any, false alerts thanks to this post-filtering. A great improvement!

“The question of whether a computer can think is no more interesting than the question of whether a submarine can swim.”

― Edsger W. Dijkstra

“People worry that computers will get too smart and take over the world, but the real problem is that they’re too stupid and they’ve already taken over the world.”

― Pedro Domingos

The Results are In

Well, after I got the pair up and running, I have to say the results have been quite an improvement. Instead of getting a picture of a bird flying by, or shadows moving due to the wind, or a whole host of other things, I now find all of these being filtered out. With a little bit of tuning of the minimum confidence level, I found it to detect the things I cared about quite reliably.

As can be seen on the left, it was able to pick me out as a person at a variety of distances and orientations quite successfully. It has also detected cars and such without any problems.

So overall, by only adding 10 W to my system requirements, this has been a very successful implementation. I am interested in trying out the GPU capabilities for other tasks as well, and seeing how well it performs. This thing is really a low powered powerhouse….

Some Improvements

Overall it works quite well out of the box, and captures most of what I care about. There are, however, some missing objects I would like to add to its database.

Fortunately, Deepstack does allow and provide tools for training on custom objects. The issue for me will be to come up with enough labeled data to support this training. For instance it sees a moose as either a cow or a dog, depending on the angle. It also sees my UTV as a truck, which is close, but it would be nice to get a different message for each. There are also a lot of animals unique to high altitude that it is not aware of, so it would also be nice to get an alert telling me there is a marmot on the deck. So in the long run, I have some training to do at some point.

Overall though, the project was a great success and a good learning experience. It shows that off the grid AI is not only possible, but easily available today. There are probably many other uses I can come up with over time, so it will be a great experimentation platform.

Thanks for joining me in this edition of the Off Grid CTO, and I look forward to our next edition!

“What people call #AI is no more than using correlation to find answers to questions we know to ask. Real #AI has awareness of causality, leading to answering questions we haven’t dreamed of yet.”

– Tom Golway